Summary: In this post, I introduce a case that many people find intuitively compelling against aggregating wellbeing. I then reframe the same scenario in a way that often reverses those intuitions, favoring aggregation. I argue that the fact that different framings of the same scenario pull in opposite directions gives us good evidence that this case is an instance of a broader aggregation bias. This bias, I argue, provides positive evidence for the reliability of the pro-aggregationist framings. Finally, I show that once one accepts some conclusions of the pro-aggregationist case, there are good decision-theoretic reasons to accept a stronger version.

You have two buttons in front of you, one red and one blue, and you must choose one:

The red button turns off every TV in the US for 5 minutes during the Super Bowl, just as things are heating up. This makes a lot of people (~127 million) mildly upset.

The blue button brutally tortures one person for an hour.

You may have the intuition (and I believe this is most people’s knee-jerk reaction) that you should click the red button. “Some slight inconvenience to many is not worth the torture of one,” you may cry out. “Have you even read The Ones Who Walk Away from Omelas???”

You may even make the further claim: “There are no amounts of small inconveniences that can aggregate into one person feeling torture.”

That’s where I’m gonna have to stop you. I used to think that this view was tenable, but I no longer do. Philosophers give different reasons for rejecting it (and the question is still debated); in this post, I will give what I take to be a knock-down argument against the anti-aggregationist view of wellbeing.

The Intuition Pump

Let’s consider a specific minor inconvenience: the feeling that the average person gets when the Super Bowl turns off in the case above. Let’s call this feeling x. You might not say that one instance of x is equivalent to a headache (that is, you might not be indifferent between the pain of feeling x and the pain of a headache). But you would probably accept that there is some number of instances of x such that you’d rather endure a 5 minute headache instead. Let’s call this number q.

On top of this, you’d probably also say that there is some number of 5 minute headaches such that you’d rather endure a 3 hour headache (probably more than 36, because 3 (hours) * 60 (minutes in an hour) = 5 (minutes in the headache) * 36). In addition, there is probably some number, y, of 3 hour headaches that you’d be willing to trade for 5 seconds of migraine. There are z instances of 5 seconds of migraine you’d be willing to trade for 3 hours of migraine. There is some number of 3 hour migraines (let’s call this number g) that you’d be willing to trade for 5 seconds of torture. There is some number of 5 second torture sessions that you’d trade for 3 hours of torture. Lastly, there is some number of 3 hour torture sessions, call this t, that you’d trade for losing your life (at the very high end, one should clearly prefer death over torture lasting the rest of one’s life!).

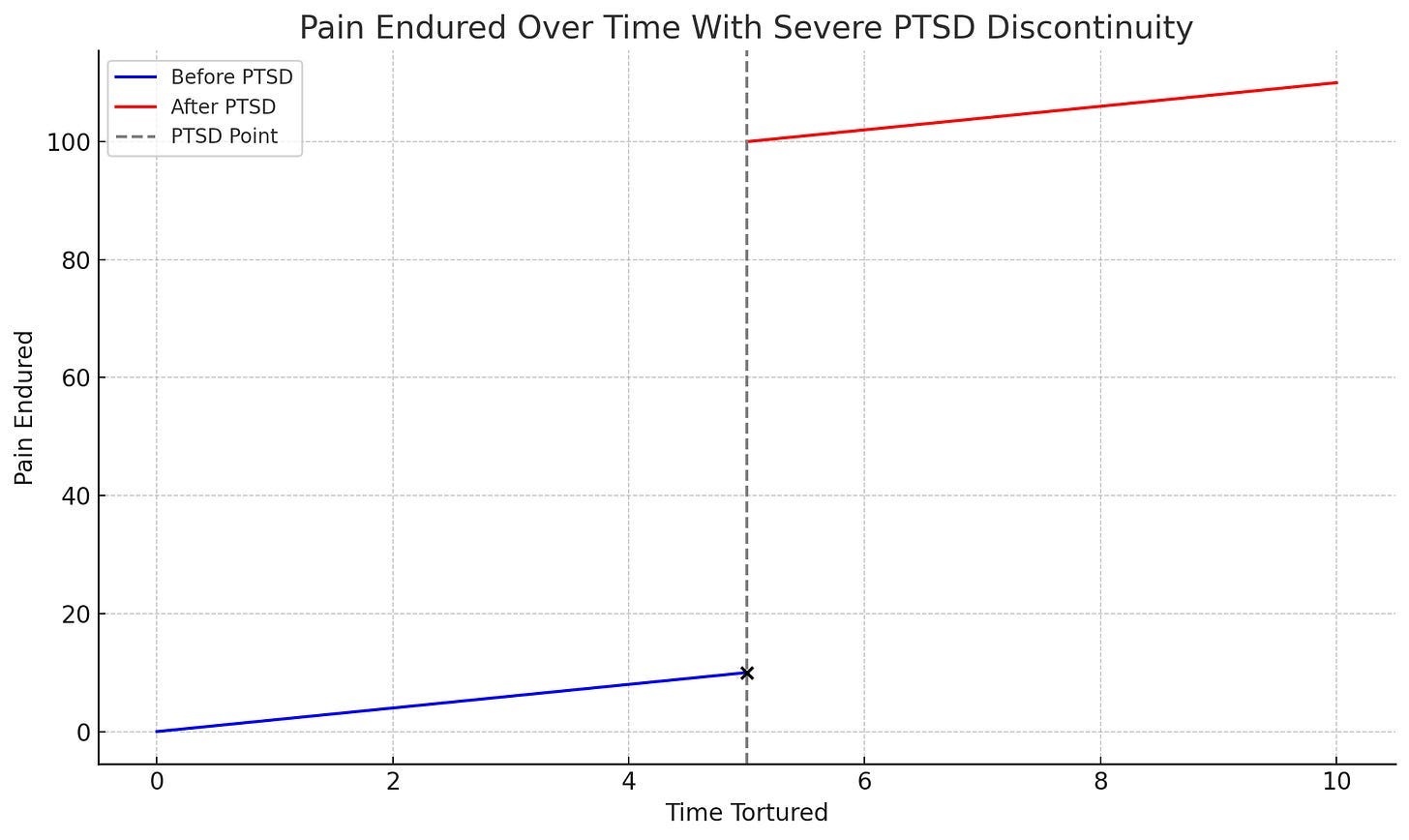

This even works if one of the steps is non-linear. For instance, say there was some level of torture where you get PTSD such that the graph goes like this:

Even in that case, you’d see the jump in pain endured and regularize it to the amount of torture time you’d be indifferent to enduring in its place.

To work out how many x’s you’d be willing to trade for death, then, you just multiply together the amounts needed to get from each level to the next. For instance, if q = 10 (that is, 10 x’s, where x is the feeling you get from the Super Bowl being turned off, trade for one 5 minute headache) and 36 five-minute headaches trade for one 3 hour headache, you’d need 10 * 36 = 360 x’s to get a 3 hour headache.

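In the situation without the discontinuity, you’d do: q * 36 * y * z * g * s * t = the number of x’s that would leave you indifferent between enduring them and death (where s is the number of 5 second torture sessions you’d trade for 3 hours of torture, which I didn’t name above). Yay!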
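To make the multiplication concrete, here is a minimal Python sketch of the chain. Every number except the 36 is a made-up placeholder (the post never assigns values to q, y, z, g, or t), and the name `chained_rate` and the step for 5 second torture sessions are my own labels for illustration.

```python
from math import prod

def chained_rate(step_rates):
    """Multiply the exchange rates between adjacent levels of the chain."""
    return prod(step_rates)

# Hypothetical exchange rates, one per step up the ladder (illustrative only).
steps = {
    "x's per 5 minute headache (q)": 10,
    "5 minute headaches per 3 hour headache": 36,
    "3 hour headaches per 5 seconds of migraine (y)": 4,
    "5 second migraines per 3 hour migraine (z)": 50,
    "3 hour migraines per 5 seconds of torture (g)": 20,
    "5 second torture sessions per 3 hours of torture": 30,
    "3 hour torture sessions per death (t)": 100,
}

# Number of x's that trade for a 3 hour headache (first two steps only).
x_per_long_headache = chained_rate(list(steps.values())[:2])

# Number of x's that trade for death (all steps).
x_per_death = chained_rate(steps.values())

print(x_per_long_headache, x_per_death)
```

The point is not the particular total but that any finite rate at each step yields a finite total.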

And here’s a tip: If you’re making this argument to someone, and they’re not budging, make the difference between the levels smaller. From my experience, this argument lands best if the amounts between different experiences of pain are very small.

And the same argument holds for joy! While some say that there is nothing that they’d trade off for love, the same framing effects occur here. Let’s use the same method to see why:

There are some lollipop licks you’d trade for a brownie. Some amount of tastes of a brownie (over a period of time - at some point, you’d probably face diminishing returns — but this doesn’t cause any issues as long as you allow for the lollipops to be taken over a sufficiently long period of time) you’d trade for a really good brownie. Some really good brownies over some time for a good TV show. Some good shows for a nice compliment. Some compliments for a moment of nostalgia. Some moments of nostalgia for some amount of time feeling genuinely understood…

As you can see, even things that feel incommensurable start to feel exchangeable once we slowly increase the levels. There is a question here about why people have a different reaction in the cases where the things are very far (lollipop licks to feeling genuinely understood), and we will get to that in the error theory section.

While you can have intuitions in both directions, the question now is which of these, if any, is more reliable? Is there anything that can be said in favor of relying on pro-aggregationist rather than anti-aggregationist intuitions?

I think yes!

An Error Theory

I think this case exemplifies a broader aggregation bias—a tendency to systematically misjudge situations that involve summarizing complex information into a single value. This is supported by the fact that many known cognitive biases arise in contexts involving both (a) aggregation and (b) vulnerability to framing effects. The recurrence of both features across multiple biased cases would be highly surprising if they were not driven by a common mechanism. Given this pattern, then, we should update significantly toward the hypothesis that this case is another instance of that same aggregation bias.

Consider a case from this post about scope insensitivity in ethics:

Once upon a time, three groups of subjects were asked how much they would pay to save 2,000 / 20,000 / 200,000 migrating birds from drowning in uncovered oil ponds. The groups respectively answered $80, $78, and $88. This is scope insensitivity or scope neglect: the number of birds saved—the scope of the altruistic action—had little effect on willingness to pay.
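As a rough illustration of how flat these answers are, the implied willingness to pay per bird can be worked out from the three figures quoted above. This is only arithmetic on the quoted numbers; the variable names are mine, and the "linear" figure simply assumes the $80 answer scaled proportionally.

```python
# Figures quoted above: birds saved and the average stated willingness to pay (USD).
birds = [2_000, 20_000, 200_000]
wtp = [80, 78, 88]

# Implied dollars per bird in each group.
per_bird = [w / b for w, b in zip(wtp, birds)]

# What the largest group would have answered if payment scaled linearly
# from the smallest group's answer (an assumption made for comparison only).
linear_200k = wtp[0] * birds[2] / birds[0]

for b, w, p in zip(birds, wtp, per_bird):
    print(f"{b:>7} birds: ${w} total, ${p:.5f} per bird")
print(f"Linear scaling from the first group would give ${linear_200k:,.0f}")
```

Per bird, the smallest group's answer is roughly ninety times the largest group's.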

Consider this general case of dealing with big numbers outside of values or morals:

Dehaene (2003) found that the brain’s “number sense” is logarithmic, not linear—meaning we see differences between 1 and 10 more strongly than between 1,000 and 1,010. This makes it harder to appreciate large absolute (as opposed to relative) differences once numbers grow past a certain scale.

For instance, one might be indifferent between spending $10,000 and $10,500 on a wedding, even though the $500 would have felt like a meaningful difference in another context, because the two numbers feel equally big in scale.
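Here is a small sketch of what a logarithmic number sense implies for these examples. It assumes a pure base-10 log scale as a stand-in for perception, which is a simplification of my own rather than a claim about the exact model in the cited work; `perceived_gap` is a hypothetical helper name.

```python
import math

def perceived_gap(a, b):
    """Distance between a and b on a logarithmic (base-10) number line."""
    return abs(math.log10(b) - math.log10(a))

# Same absolute gaps can feel very different on a log scale.
print(perceived_gap(1, 10))            # a gap of 9 starting from 1
print(perceived_gap(1_000, 1_010))     # a gap of 10 starting from 1,000
print(perceived_gap(10_000, 10_500))   # the wedding example: $500 on top of $10,000
print(perceived_gap(500, 1_000))       # the same $500 on top of $500
```

On this scale, the $500 added to a $10,000 budget registers as a far smaller step than the same $500 added to a $500 budget.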

Consider this case of aggregation:

People consistently underestimate large crowd sizes, especially when viewing from the ground or a limited vantage point. Studies like Paul et al., 2016 show that even trained observers can be off by orders of magnitude, largely because the brain can't aggregate large numbers of discrete individuals without external tools or overhead perspective. Instead, we rely on heuristics like “density in a small area × guessed area size,” which often breaks down with scale.

Now we have a better understanding of why people tend to give different answers in different cases: it seems to be an instance of the aggregation bias we experience in general.

“Okay,” you might say; “but we still haven’t shown which of the procedures is more subject to this bias, so we have no reason to think that the pro-aggregationist or anti-aggregationist rule is more reliable.”

I think that we actually do have an idea!

Which Way Does The Bias Go?

I claim that there is still stronger evidence to show that the anti-aggregationist intuition is more biased. This is because people are more accurate when situations are presented to them with the framings associated with the pro-aggregationist position in the other cases of bias. Let’s go through an example:

If you asked someone to guess the size of the crowd, as stated, they would likely be very inaccurate. However, if you started from a small part of the crowd and then slowly added more people, they would be much more likely to guess accurately. This is called groupitizing and is often seen as a debiasing technique in these types of cases: a way to make oneself systematically more accurate where humans often are not. There are various psychological reasons why people get better at estimation using this method, but that’s beyond the scope of this piece.

In addition, I would suspect (though, to be fair, there is no psychological literature to back this up) that the bias would at least start to dissipate in the moral case if you used this same debiasing technique. Part of this is a very easy claim to make: most people clearly wouldn’t pay less to save 20,000 birds than 2,000 birds if they were asked about both sequentially, even though they sometimes do when asked separately! There is another (more difficult but, in my view, reasonable) claim: that they would also likely become more linear in their willingness to pay as they are asked questions with larger and larger scope.

It seems, then, that our intuitions become more accurate as we groupitize in these cases, that is, add the values up slowly and sequentially. This sounds pretty similar to the case we had initially, the one in which you moved from some lower pains or pleasures (i.e. the annoyance of someone turning off the Super Bowl) to medium pains (headaches/migraines) to higher pains (torture and even death).

From here, we can say that we’ve accepted two premises: (1) the two cases (of general aggregation and value aggregation) experience the same mechanism of bias, and (2) that the framings that tend to increase accuracy are more similar to the value-aggregation cases where intuitions tend to be more pro-aggregationist.

Bayes’ Theorem tells us that if some piece of evidence is weird and surprising under one hypothesis and makes more sense under a different hypothesis, we should update towards the hypothesis that makes more sense of it. In this case, it would be weird and surprising if the same biasing mechanism occurred in both cases and yet the framing that leads you to the wrong conclusion in one case (i.e. guessing the crowd size without groupitizing) led you to the correct conclusion in the other (i.e. judging whether any number of small inconveniences could outweigh torture without groupitizing, that is, without incrementally increasing the pains).
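For readers who want to see the shape of this update, here is a minimal sketch of Bayes' theorem for a single yes/no hypothesis. The probabilities are invented purely for illustration (the post gives no numbers), and `posterior` is a hypothetical helper name.

```python
def posterior(prior, p_e_given_h, p_e_given_not_h):
    """Bayes' theorem for a binary hypothesis H after observing evidence E."""
    numerator = prior * p_e_given_h
    return numerator / (numerator + (1 - prior) * p_e_given_not_h)

# H: the anti-aggregationist intuition is the biased one.
# E: the same bias mechanism shows up elsewhere, and incremental framings
#    improve accuracy there.
# All three numbers below are illustrative assumptions, not estimates.
p = posterior(prior=0.5, p_e_given_h=0.8, p_e_given_not_h=0.2)
print(p)
```

The less expected the evidence is under the rival hypothesis (the smaller `p_e_given_not_h`), the larger the update toward H.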

Therefore, one should conclude that their knee-jerk anti-aggregationist intuitions (and the framings associated with them) are unreliable. We can indeed aggregate wellbeing!

But you might still fuss about it: “What if I’m willing to endorse all of the trades from one sequence to the next (i.e. from Super Bowl pain x to 3 hour long headaches), but I just stop at some level when dealing with the trades from much lower to much higher pains (i.e. from some amounts of Super Bowl pain x to torture)?”

This, I argue, probably gets you into way more trouble than you’re willing to endure (even for some finite number n of headaches, *ba dum tsss*).

Increments Without Total Aggregation?

Let’s express the view you’re offering in the abstract:

“There are some x’s such that I would trade an amount (let’s call this a) of them for y. There are some y’s such that I would trade an amount (let’s call this b) of them for z. However, there is no amount of x’s that I would trade for z.”

This breaks transitivity (an axiom widely used in economics and other disciplines involving values), which leads to all sorts of fun outcomes (reader discretion: that was a joke - the outcomes are, in fact, not very fun). Here are a few:

If you reject transitivity, you can get money pumped; this literally means that you will willingly give someone all of your money if they offer you a certain series of trades. Consider the following case:

You prefer A > B, B > C, but C > A (i.e. you break transitivity). Let’s say that each of these preferences is strong enough that you’d pay at least some teeny tiny amount $x to trade up, that you currently hold B while Noah holds A and C, and that Noah is a savvy bettor who really likes having all of your money for free 😉. Noah can steal… I mean sell you his A for your B plus that tiny $x. Then, he can sell you his C for your A and another $x. Finally, he can sell you back your original B for your C in addition to another $x. Now everyone holds exactly what they started with, except that Noah has 3 * $x of your money; in other words, you willingly gave him money for free. Noah can literally do this indefinitely (or, at least, until you lose all your money/utility).
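Here is a minimal simulation of a money pump against the cyclic preferences A > B, B > C, C > A. It assumes the agent will always pay a fixed fee to swap their current item for one they prefer; the function name, the starting item, and the dollar amounts are hypothetical choices of mine.

```python
# Cyclic (intransitive) strict preferences: each key is preferred to its value.
PREFERS_OVER = {"A": "B", "B": "C", "C": "A"}  # A > B, B > C, C > A

def run_money_pump(start_item, money, fee, rounds):
    """Simulate an agent who pays `fee` to swap to any item they prefer."""
    item = start_item
    for _ in range(rounds):
        if money < fee:
            break  # the agent has nothing left to pay with
        # The item the agent strictly prefers to what they currently hold.
        better = next(k for k, v in PREFERS_OVER.items() if v == item)
        item, money = better, money - fee
    return item, money

# One full cycle: B -> A -> C -> B, paying the fee three times.
print(run_money_pump("B", money=100, fee=1, rounds=3))

# Keep offering trades and the agent is eventually drained completely.
print(run_money_pump("B", money=100, fee=1, rounds=300))
```

After three trades the agent holds the same item as before and is three fees poorer, which is the whole trick.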

This, I (and most decision theorists) argue, is a bad outcome that you shouldn’t accept. There are two ways of seeing why. The first is simple: a mere note that losing all your money is clearly irrational (which implies that having an intransitive structure to your preferences is also irrational). The second is that, while you may not actually lose all your money in the real world (not all people are as betting savvy as Noah, and people don’t often ask you for trades in hopes that you are intransitive), there’s something wrong with your preferences, in principle, because you’re leaving this hypothetical door open.

But it’s not over yet! There are more awful consequences that result from this weird preference ordering.

No consistent maximization:

In addition to literally losing all your money (ouch), there is also no utility function that can represent your preferences (because utility functions require transitivity). This means that you can’t maximize your utility.

Okay, I just said a bunch of stuff, but what does that mean in the real world?

Fair question. I won’t go into everything (it’s complicated, and I don’t know how it all works), but one thing is that you don’t accept the axioms required for expected value (or EV) calculations, the most standard decision making procedure for acting under uncertainty. While you can still use some other decision rules that approximate EV, in those cases, you’re going to get some outcomes that seem pretty weird and irrational (like overruling the intransitive preferences you initially stated that you had).

No best options:

In many situations, there’s no best option for what you should do; this leaves you with endless cycles between different options (A to B to C and back to A). Not only does this sound extremely dizzying, but it also leaves you with a lot of indecisiveness. This is no way for a rational agent to live their life!

A Weaker Version?

Even if you accept some weaker version of the anti-aggregationist view (where you merely refuse to multiply m * n to get the trade-off between x and z if m x’s = y and n y’s = z), you still get weird outcomes (ones that are close to but not as bad as the previous full rejection of transitivity).

Irreversibility (if you promise me you won’t break transitivity or the property explained above, you can skip to the section titled “Explanations for the Bias”)

You might trade some things and not want to get what you traded for back. For instance:

Imagine you fairly trade 100x for 10y. Then, you trade 10y for one z. However, you’d refuse to trade a z for any amount of x. This becomes especially painful in policy, when

More Money Pumps:

While you’re not subject to normal money pumps, you’re still subject to some quasi-money pumps that are taken in the form of bundles. For instance, suppose your exchange rates are non-linear:

You’re willing to pay 10x for 1y

And 10y for 1z

But only willing to trade 200x for 1z, not 100

A clever agent (Noah’s back) can:

Break z into 10y

Sell each y to you for 10x (10 × 10 = 100x)

Then bundle z back and offer to reverse the trade at 200x

Once again, you lost all your money for literally no gain; seems pretty bad, right? There are more weird things that this implies, but I won’t get into them because you probably already get it, are bored, and are ready for this post to be over.
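One way to read the bundle example: the price of z implied by chaining your own step rates (10 × 10 = 100x) differs from the price you accept when x is traded for z directly (200x), and a trader can pocket that gap on every round trip. The sketch below only computes that gap under this reading. It does not model the individual trades, and the names are mine.

```python
X_PER_Y = 10           # you treat 10x as a fair price for 1y
Y_PER_Z = 10           # you treat 10y as a fair price for 1z
DIRECT_X_PER_Z = 200   # but you quote 200x when x is traded for z directly

# Price of z implied by going through y, using your own step rates.
implied_x_per_z = X_PER_Y * Y_PER_Z

# The inconsistency a trader can exploit on each round trip.
gap_per_cycle = DIRECT_X_PER_Z - implied_x_per_z

def loss_after(cycles):
    """Total x lost after the given number of round trips (assumed reading)."""
    return cycles * gap_per_cycle

print(implied_x_per_z, gap_per_cycle, loss_after(5))
```

If the direct rate equaled the chained rate, the gap would be zero and there would be nothing to exploit.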

Explanations for the Bias

Now that I’ve convinced you that anti-aggregationist intuitions are merely biased, we can do a bit of fun speculation about why these biases exist in the first place. If you are so inclined, and if you believe that these are good explanations of the bias, you should update even more strongly in the direction of thinking that anti-aggregationist intuitions are biased!

You might now be inclined to think that you shouldn’t be anti-aggregationist and maybe even that you shouldn’t be non-linear about your aggregation. You might then ask: “What is going wrong with our intuitions?” I think there are a few reasonable answers here.

Unnecessary – Historically (in our hunter-gatherer environments), we didn’t need to aggregate many instances of small pains into large amounts of pain. Because this is a tricky and computationally expensive algorithm to learn and it yields little evolutionary benefit (as we rarely, if ever, had to do it), our brains wouldn’t have learned to do it. This fits with the framework called resource-rational analysis, on which your brain only learns more accurate algorithms if they have a net positive effect in the trade-off between complexity (the cost) and usefulness (the benefit).

Status – There is status around not consequentializing things. To say that some things are morally sacred and cannot be broken down into parts is, in some circles at least, seen as principled and loyal. Therefore, you have incentives to say that they cannot be broken down, even if they can be. Interestingly, this theory would predict that while you might say this in theory, you probably wouldn’t act this way in practice (as is usually the case with things that were developed for signalling reasons), though this is hard to test for due to reasonable ethical constraints.

As always, tell me why I’m wrong!

I don’t disagree with your headache example. The difference between it and your initial example (mild discomfort of many distinct beings weighed against the torture of one) is that in your initial example the discomfort of many is experienced only discretely. No one receives more than one dose of mild discomfort. There is no being who experiences the total discomfort of all the TVs being turned off. This is why I believe one person’s torture is still worse than everyone’s mild discomfort.

In your headache example, and subsequent examples, you aggregate all the discomforts in a single individual, who does bear the full weight of the aggregated discomfort.

These two examples are apples and oranges to me.

You've convinced me that you can aggregate utility within people, and that people are bad at estimating crowd sizes, but I am not convinced you can aggregate utility across people in the way you are describing. Woolery said it best: the intuition against aggregating this way comes from the fact that we are comparing experiences here, and no single individual feels the sum of all the inconveniences of having the Super Bowl shut off.

Also, why is the best aggregation function automatically summation? I think comparing average utility between groups very easily gets you to prefer saving the one person from being tortured. And it preserves a justifiable focus on experience.

Great post! Always looking to be challenged on my skepticism about aggregating utility.